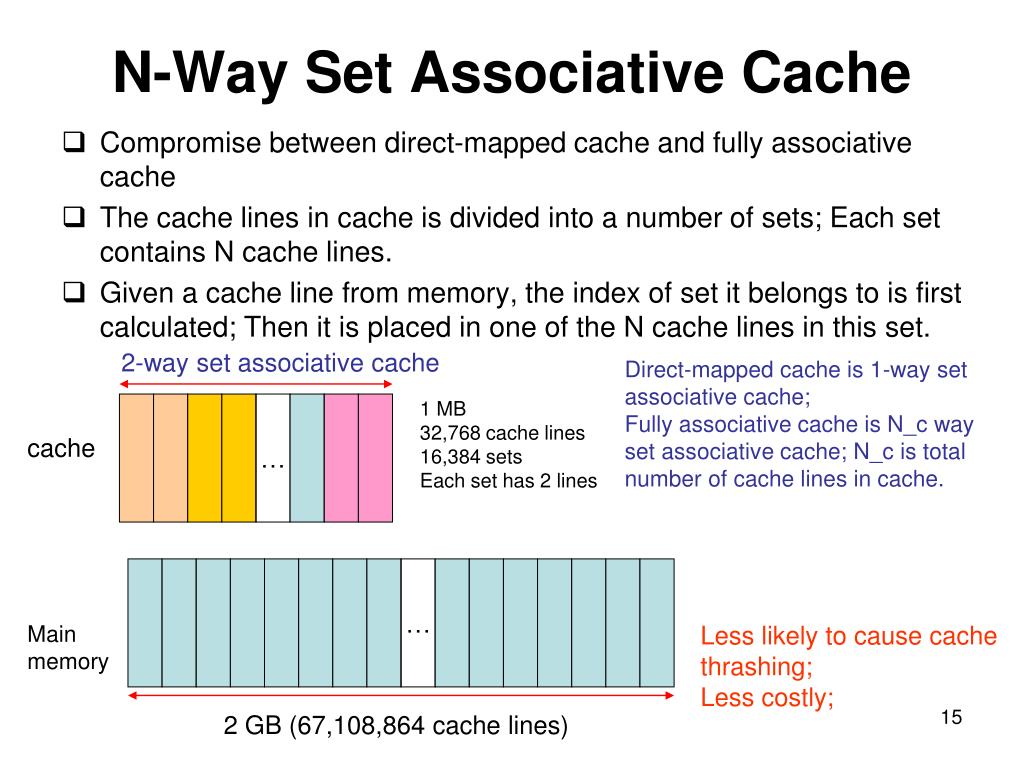

I've been trying to review caches recently, and I find myself struggling to understand the difference between these two types of cache when applied in a practical sense. While I understand that a direct mapped cache must map each block to exactly one location, and therefore suffers additional conflict misses, I don't really see how to predict this in a practical example.

For example, suppose I have a 2 MB cache with a block size of 64 bytes and I run the following loop. If I want to calculate the number of cache misses that occur when running it with a direct mapped cache vs a fully associative cache vs a 16-way set associative cache, I don't understand how they will actually behave differently. My understanding is that, for all three options, accessing A essentially has a 1/16 miss rate, and B will miss 1/2 of the time because, despite its suboptimal access pattern, the cache is able to retain B accesses for one cycle due to the size of memory. However, this all feels really wrong to me, and I feel as though the three different styles of cache should have different miss rates. If someone could help me through this example, I'd really appreciate it.

In short, you have basically answered your question. These are two different ways of organizing a cache (another one is n-way set associative, which combines both and is the one most often used in real-world CPUs).

A direct mapped cache is simpler (it requires just one comparator and one multiplexer), and as a result it is cheaper and works faster. In direct mapping, only one possible place in the cache is available for each block in main memory, so there is only one entry in the cache that could possibly hold a matching block. Given any address, it is easy to identify the single entry in the cache where it can be. For a 2^N-byte cache, the uppermost (32 - N) bits of the address are the cache tag, and the lowest M bits are the byte select (offset) bits, where the block size is 2^M bytes. A direct mapped cache is much easier to build since it has pretty low hardware requirements, but it gives the processor less freedom on where to put things. Its major drawback is the conflict miss, which occurs when two different addresses correspond to one entry in the cache. Even if the cache is big and contains many stale entries, it can't simply evict those, because the position within the cache is predetermined by the address.

A fully associative cache is much more complex, and it allows an address to be stored in any entry: in associative mapping, any place in the cache is available for each block in main memory. In order to check whether a particular address is in the cache, it has to compare against all current entries (the tags, to be exact). Besides, in order to take advantage of temporal locality, it must have an eviction policy. Usually an approximation of LRU (least recently used) is implemented, but that adds further comparators and transistors to the design and of course consumes some time. A fully associative cache is therefore not so easy to build: its higher hardware requirements also add to the latency.

Fully associative caches are practical for small caches (for instance, the TLB caches on some Intel processors are fully associative), but those caches are small, really small. We are talking about a few dozen entries at most.

Even L1i and L1d caches are bigger and require a combined approach: the cache is divided into sets, and each set consists of "ways". Sets are directly mapped, and within a set the ways are fully associative. Put another way, in an N-way set associative cache, N direct mapped caches operate in parallel, which should make it clear that the complexity of implementing a set associative cache versus a direct mapped cache is directly related to the hardware involved. The number of ways is usually small: a set-associative cache is commonly 2 to 8 ways wide, and in the Intel Nehalem CPU, for example, there are 4-way (L1i), 8-way (L1d, L2) and 16-way (L3) sets. An N-way set associative cache pretty much solves the problem of temporal locality and is not too complex to be used in practice. It significantly reduces the likelihood of the cache thrashing seen with direct mapped caches, improving program execution speed and giving more deterministic behavior.

Here's some advice for implementing your cache simulator: you can use an `unordered_map` to store the cache data. The key can be the set/line value, and the value can be a vector of pairs of tag and access counter.

I would highly recommend a 2011 course by UC Berkeley, "Computer Science 61C", available on Archive. In addition to other stuff, it contains 3 lectures about memory hierarchy and cache implementations. There is also a 2015 edition of this course freely available on YouTube.
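To make the difference between the three organizations concrete, here is a minimal simulator sketch in Python. It follows the suggested layout (a map from set index to a list of tag and access-counter pairs), with a plain `dict` standing in for an unordered map. The function name `simulate`, the strict LRU policy, and the two-address trace are assumptions chosen for illustration; they are not the loop from the question, and real hardware usually only approximates LRU.

```python
def simulate(addresses, cache_bytes, block_bytes, ways):
    """Count misses for a trace of byte addresses, assuming strict LRU.

    ways == 1 models a direct mapped cache; ways == number of blocks
    models a fully associative cache.
    """
    num_blocks = cache_bytes // block_bytes
    num_sets = num_blocks // ways
    sets = {}      # set index -> list of [tag, last_used] pairs
    misses = 0
    for tick, addr in enumerate(addresses):
        block = addr // block_bytes   # drop the byte-offset bits
        index = block % num_sets      # set this block maps to
        tag = block // num_sets       # remaining upper bits
        lines = sets.setdefault(index, [])
        for line in lines:
            if line[0] == tag:        # hit: refresh the access counter
                line[1] = tick
                break
        else:                         # no matching tag: miss
            misses += 1
            if len(lines) == ways:    # set is full: evict the LRU line
                lines.remove(min(lines, key=lambda l: l[1]))
            lines.append([tag, tick])
    return misses


# Hypothetical trace: alternate between two addresses one cache size apart.
CACHE, BLOCK = 2 * 1024 * 1024, 64
trace = [0, CACHE] * 8

direct = simulate(trace, CACHE, BLOCK, ways=1)
sixteen_way = simulate(trace, CACHE, BLOCK, ways=16)
fully = simulate(trace, CACHE, BLOCK, ways=CACHE // BLOCK)
print(direct, sixteen_way, fully)  # prints: 16 2 2
```

With this trace, both addresses land on the same entry of the direct mapped cache and keep evicting each other, so all 16 accesses miss. In the 16-way and fully associative configurations they can coexist, leaving only the two compulsory misses. By contrast, a purely sequential walk in 4-byte steps misses once per 64-byte block (1 in 16) in every configuration, which is why associativity only changes the result when the access pattern produces conflicts.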
